Validated GRPs (vGRPs) are a powerful KPI for tracking the effectiveness of digital advertising. Consider how traditional online GRPs are currently used in marketing mix models. Allowing non-validated impressions into the model not only creates opportunity for error but also biases the evaluation of the medium’s ability to drive sales.

The reason is that the traditional online GRP model does not take into account whether or not an ad was seen, so a significant number of zero-value impressions are inherently included in the GRP calculation. This leads to zero or low ROI estimates and, fundamentally, renders comparisons between online GRPs and offline GRPs (in which all ad impressions are viewable) illegitimate.

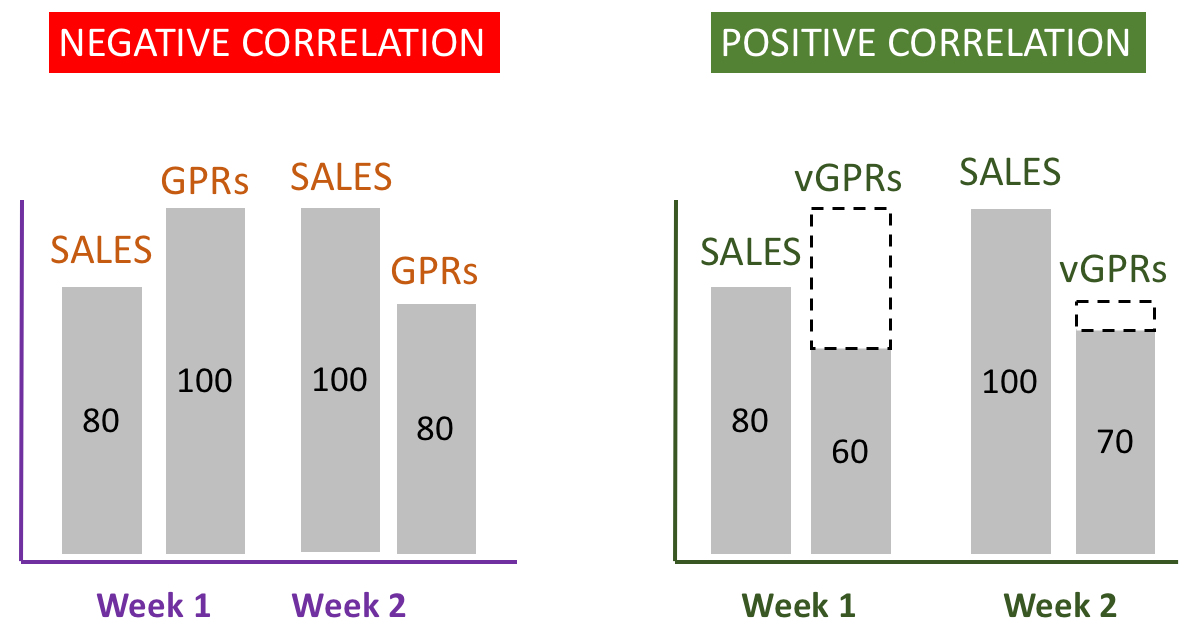

For example, imagine a scenario in which there is a negative correlation between gross online GRPs and sales, as illustrated on the left-hand side of Figure 1. From week 1 to week 2, sales go up while gross GRPs decrease from 100 to 80, which looks like a negative correlation. Through the vGRP lens, however, the relationship between advertising and sales looks quite different. In week 1, 60 of the 100 GRPs are validated, compared with 70 of the 80 in week 2. If we take into consideration only vGRPs, advertising and sales move in the same direction, showing a positive correlation. Put differently, more accurate measurement of causal variables (raising the signal-to-noise ratio) enables more accurate measurement of the impact of those variables. This example shows how marketers would draw very different conclusions about the impact of digital advertising from marketing mix models based on gross GRPs than from models based on vGRPs.

Figure 1
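The two-week example above can be checked with a few lines of arithmetic. The sketch below uses the GRP figures from the text; the sales values are hypothetical placeholders, since the example only states that sales rise, and the `direction` helper is an illustrative name rather than part of any measurement tool.

```python
# GRP figures taken from the two-week example in the text.
gross_grps = {1: 100, 2: 80}
validated_grps = {1: 60, 2: 70}

# Hypothetical sales values: the example only says that sales go up.
sales = {1: 1000, 2: 1100}


def direction(series):
    """Return the week-over-week direction of a two-week series."""
    change = series[2] - series[1]
    if change > 0:
        return "up"
    if change < 0:
        return "down"
    return "flat"


# Share of gross GRPs that were validated in each week.
validated_share = {week: validated_grps[week] / gross_grps[week] for week in gross_grps}

print("sales:", direction(sales))
print("gross GRPs:", direction(gross_grps))
print("validated GRPs:", direction(validated_grps))
print("validated share by week:", validated_share)
```

Gross GRPs move against sales while validated GRPs move with them. The reversal comes from the validated share rising from 60% to 87.5% between the two weeks.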

Bear in mind that even when correlation reversals don’t occur, statistical theory documents a negative estimation bias, known as “attenuation bias” or “regression dilution”, which grows as the error in the causal variable grows. Because gross GRPs are polluted by a substantial amount of noise, they are likely to introduce a large downward bias in marketing mix models. This works to the detriment of both the publisher and the advertiser, because the result is an underestimation of the effects of digital advertising.
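A small simulation can illustrate attenuation bias. The sketch below assumes the classical textbook setting, where the observed causal variable equals the true one plus independent random error; real non-validated impressions need not behave exactly this way. All numbers (true slope of 2.0, exposure spread, noise levels) and the function names are illustrative assumptions.

```python
import random


def ols_slope(x, y):
    """Slope of a simple least-squares regression of y on x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov_xy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    return cov_xy / var_x


def simulate(noise_sd, n=20000, true_slope=2.0, seed=0):
    """Estimate the slope when exposure is measured with additive noise."""
    rng = random.Random(seed)
    # True (validated) exposure, with standard deviation 10.
    true_x = [rng.gauss(50, 10) for _ in range(n)]
    # Outcome driven only by the true exposure, plus unrelated variation.
    y = [true_slope * x + rng.gauss(0, 5) for x in true_x]
    # Observed (gross) exposure: the true value plus measurement error.
    observed_x = [x + rng.gauss(0, noise_sd) for x in true_x]
    return ols_slope(observed_x, y)


for noise_sd in (0.0, 10.0, 20.0):
    print(f"noise sd {noise_sd:>4}: estimated slope {simulate(noise_sd):.2f}")
```

Under these assumptions the estimated slope shrinks toward zero by roughly the factor var(true) / (var(true) + var(noise)), so noise as large as the true variation cuts the estimated effect about in half.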

The same problem affects the brand lift model (Control Group vs Exposed Group): some of the people who are served the advertising (Exposed Group) never actually have an opportunity to see it (i.e. the delivery was not validated). Because the advertising is never seen and therefore has no chance to make an impact, the responses of these individuals are likely to be similar to those of people who never see the advertising (Control Group). Including them in the lift calculation for the Exposed Group will likely suppress the measured brand lift. In reality, these consumers should be included in the Control Group.

When we remove these consumers from the Exposed Group, thus measuring the brand lift based on validated impressions, the measured branding impact is larger. By using validated impressions rather than served impressions to determine who was actually exposed, publishers and advertisers get a more accurate view of the campaign’s effectiveness. In most cases, this more precise view will mean higher lifts for campaigns.
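The dilution described above can be sketched numerically. The example assumes, as the text argues, that people served a non-validated impression respond like the Control Group. The response rates and the validated share are hypothetical, and `observed_lift` is an illustrative helper.

```python
def observed_lift(control_rate, seen_rate, validated_share):
    """Brand lift measured on served impressions.

    control_rate: response rate among people who never see the ad.
    seen_rate: response rate among people with a validated impression.
    validated_share: fraction of the served Exposed Group that was validated.
    """
    # Non-validated members of the Exposed Group respond like the Control Group.
    exposed_rate = (
        validated_share * seen_rate + (1 - validated_share) * control_rate
    )
    return exposed_rate - control_rate


# Hypothetical rates: 20% in control, 30% among those who actually saw the ad.
true_lift = observed_lift(0.20, 0.30, validated_share=1.0)
diluted_lift = observed_lift(0.20, 0.30, validated_share=0.6)

print(f"lift on validated impressions: {true_lift:.2f}")
print(f"lift on served impressions:    {diluted_lift:.2f}")
```

Under this assumption the lift measured on served impressions is the validated-only lift multiplied by the validated share, so a 60% validated share leaves only 60% of the lift visible.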

From all this analysis we see that using validated impressions (vGRPs) as the input to campaign measurement creates value for each of the key stakeholders in the advertising value constellation:

- Publishers

- Agencies

- Advertisers